本文作者:程序员飞云

仅作为学习参考

引入依赖

<dependency> <!-- jsoup HTML parser library @ https://jsoup.org/ --> <groupId>org.jsoup</groupId> <artifactId>jsoup</artifactId> <version>1.15.3</version> </dependency>1. 爬取bing的图片

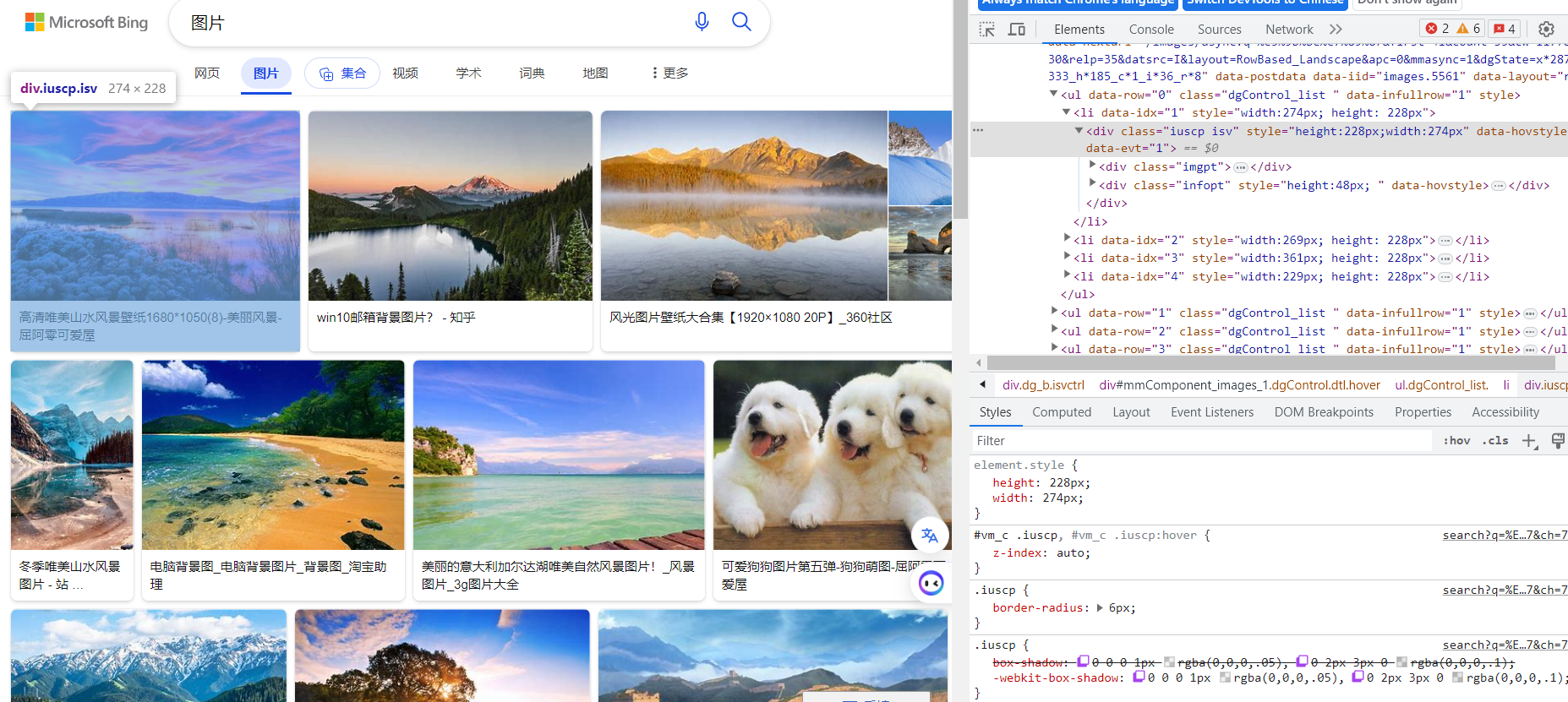

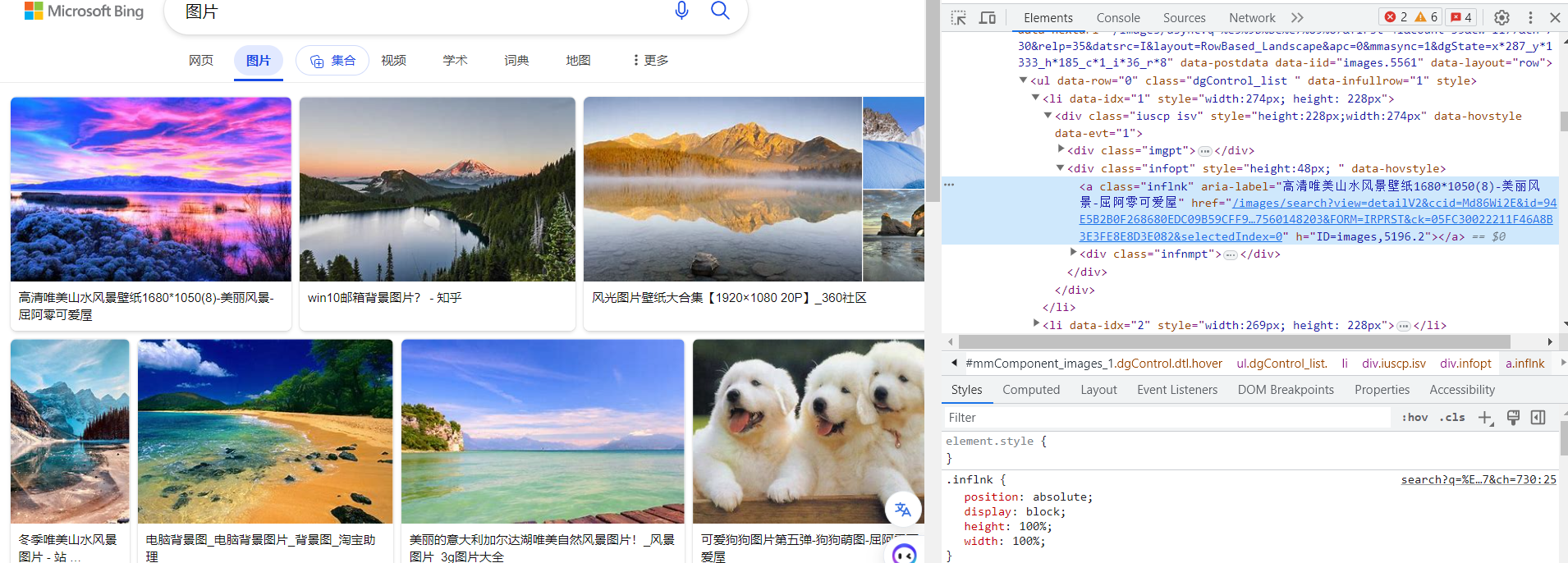

第一步先找到bing的每个图片对应的标签

1.1 先写一个请求类

用于发送json请求

@Datapublic class RandomPictureRequest { private String searchText;}1.2 实体类

用于存储图片信息,包含url和标题

@Datapublic class Picture implements Serializable { private static final long serialVersionUID = 3946514657018193905L; private String title; private String url; }1.3 controller编写

这里我使用了自定义的返回类,可以直接使用String返回,最后结果直接返回list.get(0) 这里面的pageSize是一个随机页数,保证能够获取到随机的照片,也可以自己指定。

@RequestMapping( "/picture" )@RestControllerpublic class PictureController {

/** * 随机抓取必应上的图片 * * @param randomPicture * @return */ @PostMapping( "/randomPicture" ) public BaseResponse<Picture> randomPicture(@RequestBody RandomPictureRequest randomPicture) { if (randomPicture == null) { throw new BusinessException(ErrorCode.PARAMS_ERROR); } // 获取搜索文本 String searchText = randomPicture.getSearchText(); int pageSize ; Random random = new Random(); pageSize = random.nextInt(10); String url = String.format("https://www.bing.com/images/search?q=%s&first=%s", searchText, pageSize); // 发送请求 Document doc = null; try { doc = Jsoup.connect(url) .header("Referer", "https://www.bing.com/") .timeout(1000) .get(); } catch (IOException e) { throw new RuntimeException("输入错误"); }

List<Picture> list = new ArrayList<>(); // 获取标签 Elements select = doc.select(".iuscp.isv "); for (Element element : select) { // 图片地址 String s = element.select(".iusc").get(0).attr("m"); Map map = JSONUtil.toBean(s, Map.class);

String murl = (String) map.get("murl"); // 取标题 String title = element.select(".inflnk").get(0).attr("aria-label"); Picture picture = new Picture(); picture.setTitle(title); picture.setUrl(murl); list.add(picture); } // 返回第一个值 return ResultUtils.success(list.get(0)); }目前的不足,对于有防盗链的照片无法展示

2. 爬取豆瓣的热门电影

String url = "https://movie.douban.com/"; Document document = null; try { document = Jsoup.connect(url).get(); } catch (IOException e) { e.printStackTrace(); }

Elements elements = document.select("li.ui-slide-item"); for (Element element : elements) { // 标题 String title = element.attr("data-title"); // 发行时间 String release = element.attr("data-release"); // 评分 String rate = element.attr("data-rate"); // 主演 String star = element.attr("data-star"); // 地址 String trailer = element.attr("data-trailer"); // 卖票地址 String ticket = element.attr("data-ticket"); // 时长 String duration = element.attr("data-duration"); // 发行区域 String region = element.attr("data-region"); // 导演 String director = element.attr("data-director"); // 演员 String actors = element.attr("data-actors");

System.out.println("电影名字:" + title); System.out.println("发行时间" + release); System.out.println("评分"+rate); System.out.println("地址"+trailer); System.out.println("时长"+duration); System.out.println("发行地区"+region); System.out.println("主演"+actors); }3. 豆瓣最受关注

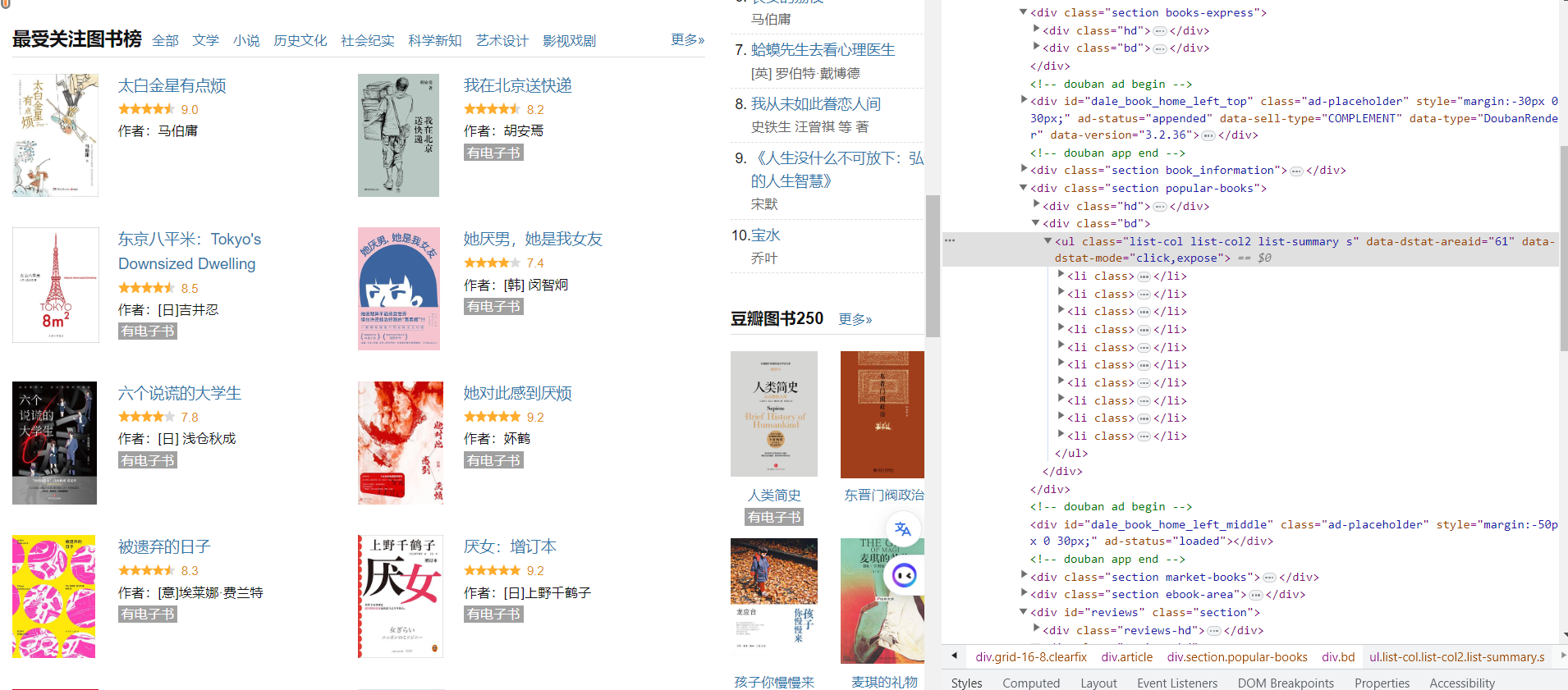

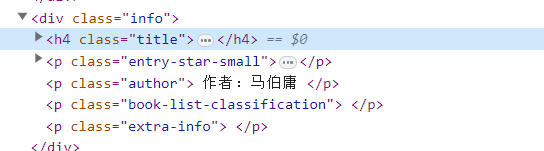

先找到整个布局的部分,list-col2,里面存储着相应的元素标签

标题通过h4.title里面的a标签获取 作者直接通过p.author获取 里面的评分需要两层获取,先获取p标签,然后获取limian的span标签p.entry-star-small span.average-rating

public BaseResponse<String> famousDouBanBook(){ String url = "https://book.douban.com/"; Document document = null; try { document = Jsoup.connect(url).get(); } catch (IOException e) { e.printStackTrace(); } System.out.println(document); Elements elements = document.select("ul.list-col2 li"); for (Element element : elements) { String title = element.select("h4.title a").text(); String coverUrl = element.select("div.cover img").attr("src"); String rate = element.select("p.entry-star-small span.average-rating").text();; String author = element.select("p.author").text();

System.out.println("书名:" + title); System.out.println("封面:" + coverUrl); System.out.println("评分:" + rate); System.out.println("作者:" + author); System.out.println("------------------------"); }

return ResultUtils.success("ok"); }4. 99健康网

地址 https://yqk.99.com.cn/yq/type/list-300-9.html

public static void main(String[] args) { String url = "https://yqk.99.com.cn/yq/type/list-300-9.html"; Document document = null; try { document = Jsoup.connect(url).get(); } catch (IOException e) { e.printStackTrace(); } Elements elements = document.select("div.list_left > div"); List<CrawlCompanyInfo> companyInfoList = new ArrayList<>();

for (Element element : elements) { String companyName = element.select("div.pro_title a").text(); String companyPhoneNumber = element.select("div.pro_text span.text01:contains(联系电话)").text(); companyPhoneNumber = companyPhoneNumber.replace("[联系电话]:", "");

CrawlCompanyInfo companyInfo = new CrawlCompanyInfo(); companyInfo.setCompanyName(companyName); companyInfo.setPhoneNum(companyPhoneNumber); companyInfoList.add(companyInfo); }

for (CrawlCompanyInfo companyInfo : companyInfoList) { System.out.println("Company Name: " + companyInfo.getCompanyName()); System.out.println("Phone Number: " + companyInfo.getPhoneNum()); System.out.println("-------------------"); }

}修改,既然数据是聚合在一起,然就可以采用hashmap存储对应的元素,然后已间隔分开存储

public static void main(String[] args) { String url = "https://yqk.99.com.cn/yq/type/list-300-9.html"; Document document = null; try { document = Jsoup.connect(url).get(); } catch (IOException e) { e.printStackTrace(); } Elements elements = document.select("div.list_left > div"); List<HashMap<String, String>> companyInfoList = new ArrayList<>();

for (Element element : elements) { String companyName = element.select("div.pro_title a").text(); String companyPhoneNumber = element.select("div.pro_text span.text01:contains(联系电话)").text(); companyPhoneNumber = companyPhoneNumber.replace("[联系电话]:", "");

HashMap<String, String> map = new HashMap<>(); map.put("companyName", companyName); map.put("phoneNum", companyPhoneNumber); companyInfoList.add(map); }

HashMap<String, String> map = companyInfoList.get(0); String companyName = map.get("companyName"); String phoneNum = map.get("phoneNum"); String[] companyNames = companyName.split(" "); String[] phoneNums = phoneNum.split(" ");

List<CrawlCompanyInfo> crawlCompanyInfoList = new ArrayList<>(); for (int i = 0; i < companyNames.length; i++) { CrawlCompanyInfo companyInfo = new CrawlCompanyInfo(); companyInfo.setCompanyName(companyNames[i]); companyInfo.setPhoneNum(phoneNums[i]); crawlCompanyInfoList.add(companyInfo); }

for (CrawlCompanyInfo companyInfo : crawlCompanyInfoList) { System.out.println("Company Name: " + companyInfo.getCompanyName()); System.out.println("Phone Number: " + companyInfo.getPhoneNum()); System.out.println("-------------------"); }

}5. 爬取99网站药店公司信息

public static void main(String[] args) { String url = "https://ypk.99.com.cn/yaodian/"; Document document = null; try { document = Jsoup.connect(url).get(); } catch (IOException e) { e.printStackTrace(); } Elements elements = document.select("dl");

List<CrawlSaleInfo> list = new ArrayList<>(); for (Element element : elements) { String saleName = element.select("div.pmy-name b a").text(); Elements phoneElements = element.select("div.pmy-name p"); String phoneNumber = phoneElements.size() > 0 ? phoneElements.get(0).text().replace("联系电话:", "") : "";

CrawlSaleInfo crawlSaleInfo = new CrawlSaleInfo(); crawlSaleInfo.setPhoneNum(phoneNumber); crawlSaleInfo.setSaleName(saleName); list.add(crawlSaleInfo); }

list.stream() .filter(crawlSaleInfo -> !crawlSaleInfo.getSaleName().isEmpty() && !crawlSaleInfo.getPhoneNum().isEmpty()) .forEach(System.out::println);

}6. 爬取99网站药品信息

public static void main(String[] args) { String url = "https://ypk.99.com.cn/"; Document document = null; try { document = Jsoup.connect(url).get(); } catch (IOException e) { e.printStackTrace(); } Elements elements = document.select("div.wrap2-right ul li");

for (Element element : elements) { String title = element.select("a.wrap2-name").attr("title"); String src = element.select("img").first().attr("src"); String paragraph = element.select("p").text();

System.out.println("Title: " + title); System.out.println("Src: " + src); System.out.println("Paragraph: " + paragraph); System.out.println("--------------------"); } }

评论